Imagine trying to talk to a friend or colleague in a crowded, noisy room. It can be difficult to follow every word, but the human brain is often able to focus on one speaker while pushing other voices and background noise aside. Hearing aids, however, do not always manage that. They can amplify speech quite well, but in noisy social settings they may also amplify the voices a listener is trying to ignore.

That challenge, often called the “cocktail party problem,” has shaped years of research by Columbia University’s Dr. Nima Mesgarani into auditory attention decoding. Put simply, that means using brain activity to identify which speaker someone is trying to hear.

In 2012, Dr. Mesgarani and Dr. Edward Chang showed that brain activity could reveal which speaker someone was trying to hear when two people were talking at once.

That finding helped pave the way for later work. In a study published in Nature Neuroscience, researchers at Columbia University’s Zuckerman Institute reported that a brain-controlled system improved speech perception in early human studies by detecting which speaker a listener was focusing on and reducing competing speech in real time.

“We have developed a system that acts as a neural extension of the user, leveraging the brain’s natural ability to filter through all the sounds in a complex environment to dynamically isolate the specific conversation they wish to hear,” Dr. Mesgarani said.

“This science empowers us to think beyond traditional hearing aids, which simply amplify sound, toward a future where technology can restore the sophisticated, selective hearing of the human brain,” he added.

The system, however, is not close to becoming an ordinary hearing aid.

The study, which involved epilepsy patients who already had electrodes implanted in their brains for clinical monitoring, gave the researchers access to brain signals that are far more precise than those available from non-invasive sensors.

How the new system worked

In the new study, the researchers worked with epilepsy patients who were already undergoing brain monitoring.

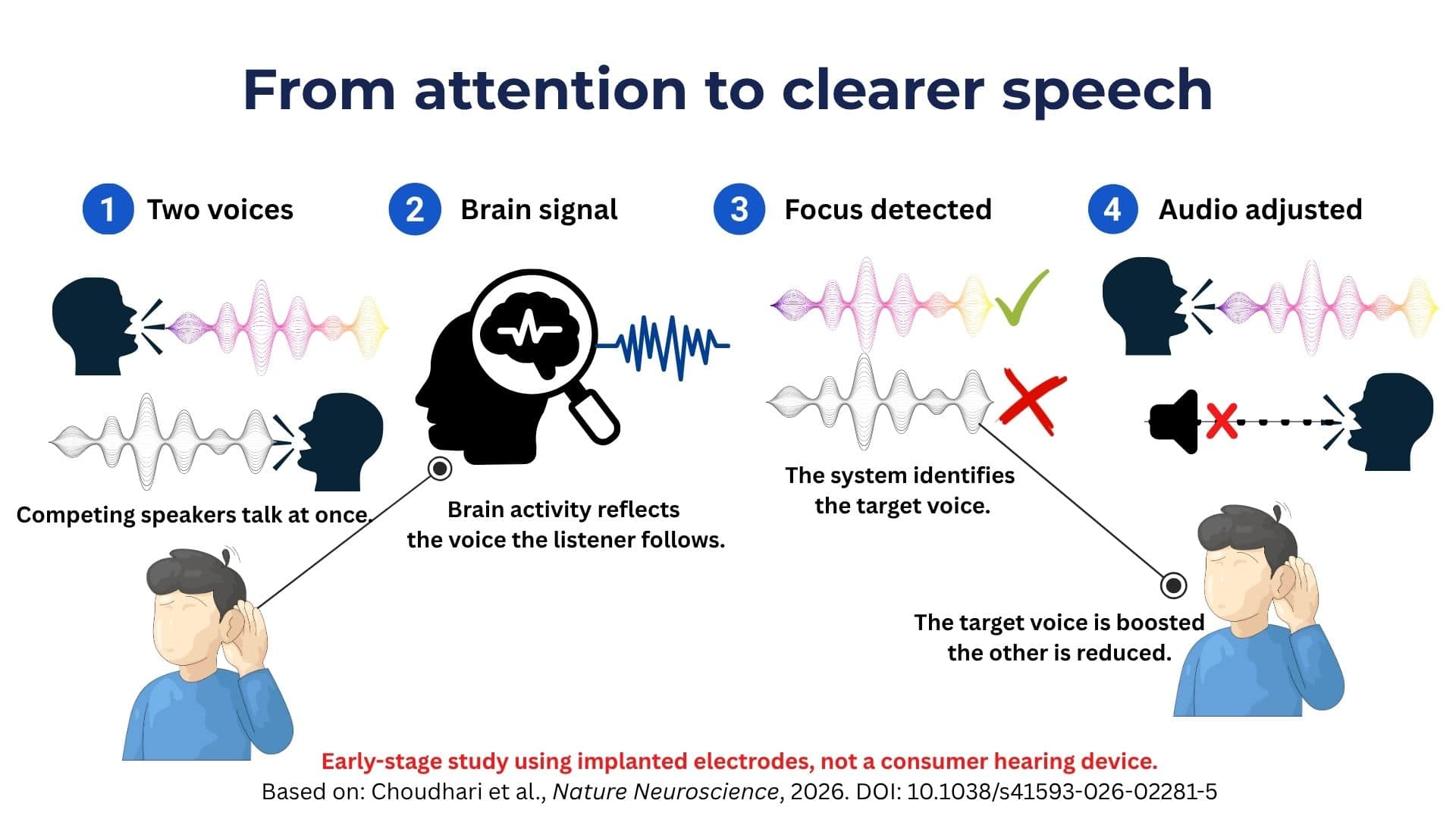

The system played two overlapping conversations at the same time. The listener was asked to focus on one of them, while the implanted electrodes recorded brain activity linked to speech attention.

Machine-learning algorithms then compared the listener’s brain signals with the speech patterns of the two speakers. When the system detected which voice the listener was attending to, it increased the volume of that conversation and reduced the competing speech in real time.
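The compare-then-amplify idea can be illustrated with a small sketch. This is not the study's algorithm, which is not detailed here. It assumes a decoder has already turned the brain recording into an estimate of the speech envelope (the loudness contour) the listener is following, and it uses simple correlation as a stand-in for the comparison step. The function names, gain values and synthetic data are all hypothetical.

```python
import numpy as np


def decode_attended(reconstructed, env_a, env_b):
    """Pick the speaker whose envelope best matches the neural estimate.

    `reconstructed` is an ASSUMED input: a speech envelope already
    estimated from brain activity by some upstream decoder.
    Returns (index, (r_a, r_b)), where index 0 means speaker A, 1 means B.
    """
    r_a = np.corrcoef(reconstructed, env_a)[0, 1]
    r_b = np.corrcoef(reconstructed, env_b)[0, 1]
    return (0 if r_a >= r_b else 1), (r_a, r_b)


def remix(audio_a, audio_b, attended, boost_db=6.0, cut_db=-12.0):
    """Boost the attended speaker and attenuate the other.

    The dB values are illustrative, not taken from the study.
    """
    gains = [10 ** (cut_db / 20)] * 2          # start with both attenuated
    gains[attended] = 10 ** (boost_db / 20)    # then boost the attended one
    return gains[0] * audio_a + gains[1] * audio_b


# Synthetic demo: the "neural" estimate is a noisy copy of speaker B's envelope.
rng = np.random.default_rng(0)
env_a = np.abs(rng.standard_normal(500))
env_b = np.abs(rng.standard_normal(500))
reconstructed = env_b + 0.5 * rng.standard_normal(500)

attended, scores = decode_attended(reconstructed, env_a, env_b)
mixed = remix(env_a, env_b, attended)  # envelopes stand in for audio here
print("attended speaker:", "A" if attended == 0 else "B")
```

In a real-time setting this comparison would be repeated over short sliding windows of signal, so the gains can follow the listener when attention shifts from one speaker to the other.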

The important point is that the listener did not have to press a button or manually choose a speaker. The system used brain activity to infer attention. That distinction is what makes the research notable. Conventional hearing aids try to improve the sound entering the ear. This system tries to work out which sound the listener actually wants to hear.

“The central unanswered question was whether brain-controlled hearing technology could move beyond incremental advances, towards a prototype that could help someone hear better in real time,” said Vishal Choudhari, the paper’s first author and a former PhD student in Dr. Mesgarani’s lab.

“For the first time, we have shown that such a system that reads brain signals to selectively enhance conversations can provide a clear real-time benefit. This moves brain-controlled hearing from theory toward practical application,” he added.

Why the research matters for hearing technology

The findings could matter for future hearing technologies. A device that can tell which speaker someone wants to hear could help solve one of the biggest problems in everyday hearing: following a conversation when several people are talking at once.

That does not mean a commercial product is close. The Columbia system relied on implanted electrodes, which are invasive and not suitable for ordinary hearing-aid users. For the research to move beyond the lab, scientists need to show that similar attention signals can be detected through less invasive methods, such as scalp-based or ear-based sensors.

The commercial appeal is easy to understand: helping people follow speech in noisy places. Better microphones, noise reduction and AI audio processing have improved hearing devices, but they still do not always know which voice the user actually wants to hear.

Sources:

Columbia University’s Zuckerman Institute – “Brain-Controlled Hearing System Proves Itself in First Human Studies”

Choudhari, V., Nentwich, M., Johnson, S. et al. Real-time brain-controlled selective hearing enhances speech perception in multi-talker environments. Nat Neurosci (2026). https://doi.org/10.1038/s41593-026-02281-5