DeepMind, a world leader in artificial intelligence (AI) research, has announced a collaboration with the US video game developer Blizzard Entertainment to open up the real-time strategy (RTS) game ‘StarCraft II’ to AI and machine learning researchers.

DeepMind, a London-based company acquired by Google in 2014, made headlines earlier this year when its AlphaGo program became the first to beat a human professional player at the strategy board game Go.

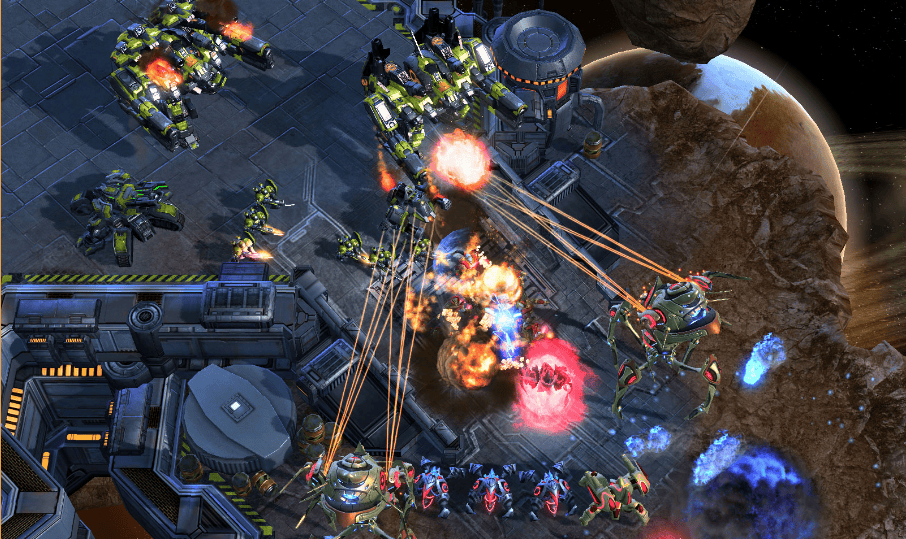

Its focus is now on the popular RTS game StarCraft II.

“We’ve worked closely with the StarCraft II team to develop an API that supports something similar to previous bots written with a “scripted” interface, allowing programmatic control of individual units and access to the full game state,” said Oriol Vinyals, Research Scientist at DeepMind, in an official blog post.

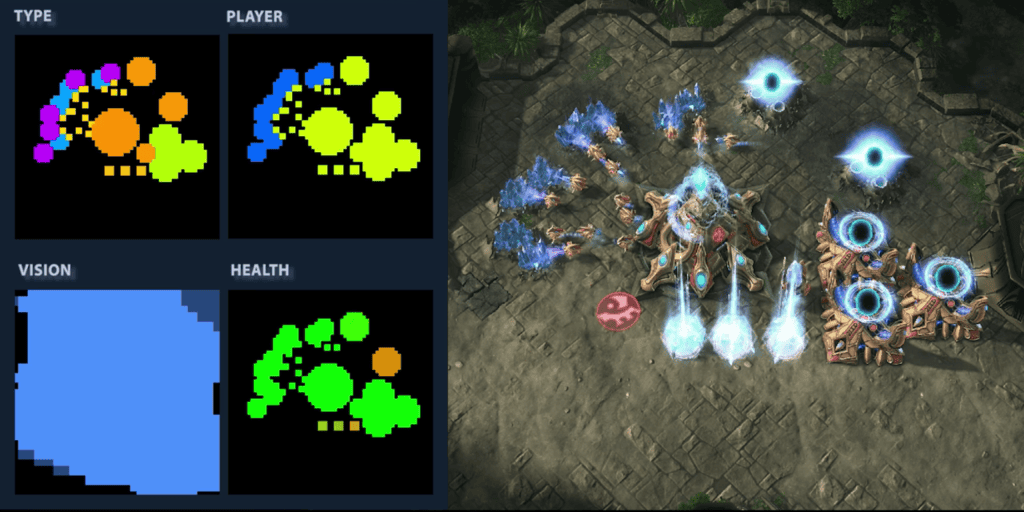

“Ultimately agents will play directly from pixels, so to get us there, we’ve developed a new image-based interface that outputs a simplified low resolution RGB image data for map & minimap, and the option to break out features into separate “layers”, like terrain heightfield, unit type, unit health etc. Below is an example of what the feature layer API will look like.”
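The snippet that follows is not the DeepMind/Blizzard API, which this article does not reproduce. It is only a rough sketch of the "layers" idea Vinyals describes: one small grid per feature, all sharing the same map coordinates. The function names, dictionary keys, and unit values are hypothetical.

```python
from typing import Dict, List

Grid = List[List[int]]


def make_layer(width: int, height: int, fill: int = 0) -> Grid:
    """Create one feature layer: a height x width grid of integers."""
    return [[fill for _ in range(width)] for _ in range(height)]


def build_feature_layers(width: int, height: int, units: List[dict]) -> Dict[str, Grid]:
    """Break a (made-up) game state out into separate per-feature grids."""
    layers = {
        "terrain_height": make_layer(width, height),
        "unit_type": make_layer(width, height),
        "unit_health": make_layer(width, height),
    }
    for unit in units:
        x, y = unit["x"], unit["y"]
        # Each layer stores a single attribute at the unit's map position.
        layers["unit_type"][y][x] = unit["type_id"]
        layers["unit_health"][y][x] = unit["health"]
    return layers


# Hypothetical units; the type IDs and health values are invented for illustration.
units = [
    {"x": 1, "y": 2, "type_id": 7, "health": 45},
    {"x": 3, "y": 0, "type_id": 9, "health": 80},
]
layers = build_feature_layers(4, 4, units)
```

An agent could then read, say, `layers["unit_health"][2][1]` to get the health of whatever occupies that cell, without parsing a full rendered frame.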

Demis Hassabis, Co-Founder & CEO, DeepMind, said he’s excited to be working on bringing StarCraft II to the AI research community:

Super-excited to be working w/@Blizzard_Ent to bring Starcraft II to the AI research community. Starcraft one of my all time favourite games https://t.co/2PRIwQMfAz

— Demis Hassabis (@demishassabis) November 4, 2016

Why did DeepMind choose StarCraft?

DeepMind says it chose StarCraft as a testing environment because the game provides “a useful bridge to the messiness of the real world.”

“The skills required for an agent to progress through the environment and play StarCraft well could ultimately transfer to real-world tasks.”

Unlike perfect information games such as Chess or Go, StarCraft offers a partially observable environment, with players having to send their units to explore unseen areas as a means of gaining information about their opponent, and then remember that information over a long period.

This type of environment will help AI researchers test reinforcement learning techniques, in which an agent acts in the world so as to maximize its rewards, learning from the outcomes of its past actions, including its mistakes.
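To make the idea of reinforcement learning concrete, here is a toy sketch using tabular Q-learning, a standard textbook method. It is not a description of DeepMind's approach to StarCraft. The five-cell corridor, the reward of 1 at the far end, and all parameter values are assumptions chosen for illustration.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # index 0 = step left, index 1 = step right
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor (arbitrary choices)


def step(state, action_index):
    """Move one cell, staying inside the corridor; reward 1.0 on reaching the goal."""
    next_state = min(max(state + ACTIONS[action_index], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def train(episodes=200, max_steps=200, seed=0):
    """Learn action values from randomly explored episodes (Q-learning is off-policy)."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            action = rng.randrange(2)  # explore uniformly at random
            next_state, reward, done = step(state, action)
            # Q-learning update: nudge the estimate toward reward + discounted best next value.
            target = reward if done else reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = train()
# Read out the greedy action (0 = left, 1 = right) for each non-goal cell.
greedy_policy = [max((0, 1), key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
```

After training, the agent prefers stepping right in every cell, because its stored values record that steps to the left only delay the reward. StarCraft is vastly harder: the state is only partly visible and the rewards arrive much later.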

“An agent that can play StarCraft will need to demonstrate effective use of memory, an ability to plan over a long time, and the capacity to adapt plans based on new information. Computers are capable of extremely fast control, but that doesn’t necessarily demonstrate intelligence, so agents must interact with the game within limits of human dexterity in terms of “Actions Per Minute”,” Vinyals said in the blog post.

He added: “StarCraft’s high-dimensional action space is quite different from those previously investigated in reinforcement learning research; to execute something as simple as “expand your base to some location”, one must coordinate mouse clicks, camera, and available resources.”
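The “Actions Per Minute” limit Vinyals mentions could, in principle, be enforced with a sliding-window rate limiter. The sketch below is one assumed way to do that, not the mechanism DeepMind or Blizzard has described. The class name, the cap value, and the 60-second window are hypothetical.

```python
from collections import deque


class ApmLimiter:
    """Allow at most `max_apm` actions within any sliding window of `window_seconds`."""

    def __init__(self, max_apm: int, window_seconds: float = 60.0):
        self.max_apm = max_apm
        self.window_seconds = window_seconds
        self._timestamps = deque()  # times of the actions still inside the window

    def try_act(self, now: float) -> bool:
        """Return True and record the action if the agent is under its cap at time `now`."""
        cutoff = now - self.window_seconds
        # Forget actions that have aged out of the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_apm:
            return False  # over the cap: the agent must wait
        self._timestamps.append(now)
        return True


# A deliberately tiny cap of 3 actions per minute, so the effect is easy to see.
limiter = ApmLimiter(max_apm=3)
results = [limiter.try_act(t) for t in (0.0, 10.0, 20.0, 30.0, 60.0)]
```

Here the fourth attempt (at 30 seconds) is refused because three actions already fall inside the window. The attempt at 60 seconds is accepted because the first action has aged out.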

Blizzard intends to improve its own games using the findings

“Is there a world where an AI can be more sophisticated, and maybe even tailored to the player?” Blizzard’s Chris Sigaty, executive producer of StarCraft II, told The Guardian. “Can we do coaching for an individual, based on how we teach the AI? There’s a lot of speculation on our side about what this will mean, but we’re sure it will help improve the game.”

Release expected ‘sometime within the first quarter’ of 2017

According to a report by Polygon:

“Researchers interested in using the RTS game to test how AI responds to it will be able to do so early next year. Blizzard is working on modifications for the game that will allow researchers to build systems specifically for the purpose of learning to play StarCraft 2. Those modifications are expected to be ready for release sometime within the first quarter.”