What is a Generative Pre-trained Transformer (GPT)?

From Siri reading out the weather forecast to Google Assistant reminding us of our meetings, Natural Language Processing (NLP) is everywhere. A star player in this revolution is the Generative Pre-trained Transformer (GPT).

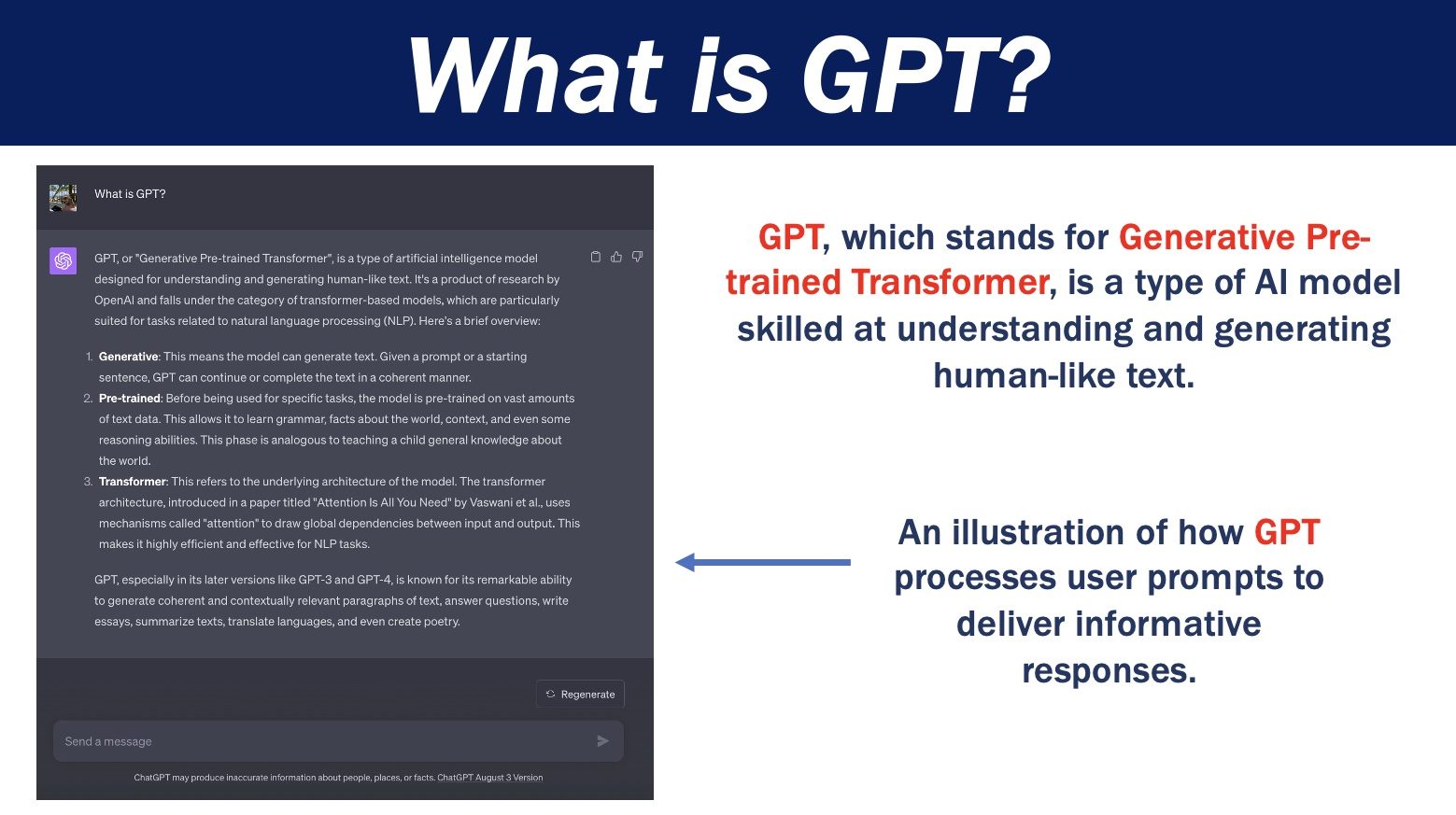

Amazon Web Services provides the following definition of GPT:

“GPT, short for Generative Pre-trained Transformers, harnesses the transformer architecture, marking a pivotal leap in AI and driving generative applications like ChatGPT. GPT models give applications the ability to create human-like text and content (images, music, and more), and answer questions in a conversational manner.”

1. What are Transformers?

Basic Idea: Imagine a machine attempting to decipher the complex nature of human language, a form of communication that frequently shifts based on context. In 2017, Vaswani and his colleagues made a groundbreaking contribution to the world of artificial intelligence with their paper titled “Attention Is All You Need”. This introduced the Transformer architecture, which has since played a foundational role in many advanced NLP models.

Mechanism: At the core of the Transformer’s prowess is the “self-attention” mechanism. Here’s how it works:

- Self-Attention: This mechanism allows the model to consider other words in a sentence when encoding a particular word. For example, in the sentence “He threw the apple,” the word “threw” has more relevance to “apple” than to “he.” The self-attention mechanism lets the model weigh the importance of each word in relation to others.

- Parallel Processing: Unlike earlier sequential models (like RNNs), which processed words one after the other, Transformers can process all words in a sentence simultaneously during training. This allows for faster training times, and because attention links every word directly to every other word, it also helps capture long-range dependencies in a sentence.

- Positional Encoding: Since the Transformer processes words in parallel, it doesn’t have an inherent sense of word order (or position). To address this, Transformers add a positional encoding to each word embedding, ensuring the model knows the position of each word in a sequence.
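
To make the positional encoding idea concrete, here is a minimal sketch of the sinusoidal scheme from the “Attention Is All You Need” paper, written in plain Python. The function names `positional_encoding` and `add_positions` and the 4-dimensional embedding are made up for illustration. Note that GPT models are generally described as using learned position embeddings rather than this fixed formula, so treat this as one possible way to do it, not as GPT’s exact method.

```python
import math

def positional_encoding(seq_len, d_model):
    """Return a seq_len x d_model table of sinusoidal position vectors."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each pair of dimensions (2k, 2k+1) shares one wavelength.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def add_positions(embeddings):
    """Add the position vector to each token embedding, element by element."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]

# Hypothetical 4-dimensional embedding: the same word appearing at two positions.
same_word = [0.5, -0.2, 0.1, 0.9]
encoded = add_positions([same_word, same_word])
```

After this step the two copies of the word carry different vectors, which is how the model can tell the first occurrence from the second even though it looks at both at once.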

Using these mechanisms, a Transformer can understand nuances in meaning based on context. For instance, it can discern that “Apple” in a sentence about technology refers to the company, while in a sentence about fruit, it refers to the edible item.
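
The “Apple” example can be sketched with a stripped-down version of self-attention. This is a simplification under stated assumptions: the 3-dimensional word vectors are invented for illustration, and the queries, keys, and values are the raw embeddings themselves, whereas a real Transformer first multiplies them by learned weight matrices and uses several attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with Q = K = V = x (no learned weights)."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Similarity of this word to every word in the sentence, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # New representation: weighted average of all word vectors.
        outputs.append([sum(w[j] * x[j][c] for j in range(len(x))) for c in range(d)])
    return outputs, weights

# Hypothetical embeddings, made up for this example.
emb = {
    "the":    [0.0, 0.0, 0.5],
    "apple":  [1.0, 1.0, 0.0],
    "iphone": [2.0, 0.0, 0.0],  # first axis stands for "technology"
    "pie":    [0.0, 2.0, 0.0],  # second axis stands for "food"
}

tech_out, tech_w = self_attention([emb["the"], emb["apple"], emb["iphone"]])
food_out, food_w = self_attention([emb["the"], emb["apple"], emb["pie"]])
apple_in_tech = tech_out[1]  # "apple" after attending to a tech context
apple_in_food = food_out[1]  # "apple" after attending to a food context
```

The input vector for “apple” is identical in both sentences, but its output leans toward the technology axis next to “iphone” and toward the food axis next to “pie”. In both cases it pays little attention to “the”.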

2. Pre-training and Fine-tuning:

Pre-training:

- Language Modeling Objective: At this stage, GPT and similar models are typically trained using a language modeling objective. They predict the next word (more precisely, the next token) in a sequence given the preceding words, much like the autocomplete on a phone keyboard suggesting what comes next.

- Massive Textual Datasets: For pre-training, models are exposed to massive datasets drawn from books, articles, and websites. This vast amount of data provides a rich tapestry of language patterns, idioms, facts, and reasoning contexts.

- Transfer Learning: The key idea behind pre-training is transfer learning. By learning from vast amounts of data, the model builds a foundational understanding of language, which can then be transferred to more specific tasks with smaller amounts of data.
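
The next-word objective described above can be illustrated with a toy example. This is only a sketch: the tiny corpus is invented, and the “model” is a table of word counts that looks at just one preceding word, standing in for a neural network that looks at the whole preceding context. What carries over to the real thing is the shape of the training data (each word is the target for what came before it) and the loss being measured.

```python
import math
from collections import Counter, defaultdict

# Hypothetical miniature corpus; real pre-training uses billions of words.
corpus = "the cat sat on the mat . the cat ate .".split()

# Training pairs: each word is the prediction target for the word before it.
pairs = list(zip(corpus[:-1], corpus[1:]))

# Stand-in "model": count which word follows which.
counts = defaultdict(Counter)
for prev, nxt in pairs:
    counts[prev][nxt] += 1

def next_word_probs(prev):
    """Probability of each possible next word, given the previous word."""
    total = sum(counts[prev].values())
    return {w: c / total for w, c in counts[prev].items()}

def average_loss(pairs):
    """Mean negative log-probability of the true next word (cross-entropy)."""
    return -sum(math.log(next_word_probs(p)[n]) for p, n in pairs) / len(pairs)

probs_after_the = next_word_probs("the")
loss = average_loss(pairs)
```

In this corpus “the” is followed by “cat” twice and “mat” once, so the model assigns “cat” a probability of two thirds. Pre-training a real model means adjusting its weights to push this kind of loss down across an enormous amount of text.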

Fine-tuning:

- Task-Specific Data: For fine-tuning, the model is trained on data specific to a particular task, be it sentiment analysis, translation, or question-answering. This data is usually labeled, meaning it comes with correct answers or outcomes to guide the model.

- Adapting to the Task: During fine-tuning, the model adjusts its previously learned weights to better suit the specific task. It’s akin to a general practitioner in medicine specializing in cardiology; they use their broad medical knowledge but refine it to become an expert in heart-related matters.

- Efficiency: One of the beauties of fine-tuning is that, because the model has already been pre-trained on a vast amount of data, it often requires much less data to adapt to a specific task than if it were trained from scratch.
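
Here is a rough sketch of why so little labeled data can be enough. It makes some loud assumptions: the `PRETRAINED` word vectors are invented stand-ins for knowledge gained during pre-training, and only a small classification layer on top is trained while those vectors stay frozen. Full fine-tuning, as described above, would also adjust the pre-trained weights themselves, so this shows a lighter variant of the same idea.

```python
import math

# Stand-in for pre-trained knowledge: fixed word vectors (made up, not from a real model).
PRETRAINED = {
    "great": [1.0, 0.2], "love": [0.9, 0.1], "good": [0.8, 0.3],
    "awful": [-1.0, 0.2], "hate": [-0.9, 0.1], "bad": [-0.8, 0.3],
}

def features(sentence):
    """Average the frozen vectors of known words; unknown words are skipped."""
    vecs = [PRETRAINED[w] for w in sentence.lower().split() if w in PRETRAINED]
    if not vecs:
        return [0.0, 0.0]
    return [sum(col) / len(vecs) for col in zip(*vecs)]

def predict(weights, bias, x):
    """Probability that the sentence is positive (logistic regression)."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune(examples, epochs=200, lr=0.5):
    """Train only the small classification head with gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = features(text)
            error = predict(weights, bias, x) - label  # gradient of the log loss
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias

# Just four labeled examples for a sentiment task.
labeled = [("I love it", 1), ("great film", 1), ("I hate it", 0), ("awful film", 0)]
weights, bias = fine_tune(labeled)

# Neither "good" nor "bad" appears in the labeled data.
score_good = predict(weights, bias, features("a good movie"))
score_bad = predict(weights, bias, features("a bad movie"))
```

The classifier never saw “good” or “bad” during fine-tuning, yet it scores them on the correct side of 0.5 because the frozen vectors already place them near “great” and “awful”. That is transfer learning in miniature.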

By combining pre-training and fine-tuning, models like GPT leverage both the broad knowledge of language and the specific nuances of a task, achieving impressive results across a range of applications.

3. Generative Aspect of GPT:

Generative Models:

- Inspiration from Data: Just as artists derive inspiration from the world around them, GPT gets its ‘inspiration’ from the massive amounts of text data it’s been trained on. By recognizing patterns, structures, and contexts from this data, it can generate entirely new sentences, paragraphs, or even essays.

- Imitation and Innovation: At its core, GPT is designed to imitate the language structures it has seen before. However, its advanced algorithms and vast training data also allow it to “innovate” by combining patterns in unique ways, producing outputs that are not mere replicas but fresh, coherent compositions.
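
Generation itself is a simple loop: pick a next word, append it, and repeat. The sketch below assumes a hypothetical table of scores called `LOGITS` in place of a real model, which would compute those scores from the entire text so far. The `temperature` setting is a common knob in this kind of sampling: low values make the most likely word win almost every time, while higher values allow more surprising combinations.

```python
import math
import random

# Hypothetical next-word scores (logits); a real model computes these from context.
LOGITS = {
    "<start>": {"the": 2.0, "a": 1.0},
    "the": {"robot": 1.5, "poet": 1.2},
    "a": {"robot": 1.0, "poet": 1.4},
    "robot": {"dreams": 1.8, "sings": 0.9},
    "poet": {"sings": 1.6, "dreams": 1.1},
    "dreams": {"<end>": 1.0},
    "sings": {"<end>": 1.0},
}

def sample_next(word, temperature, rng):
    """Turn scores into softmax weights, then draw one word at random."""
    options = LOGITS[word]
    scaled = [s / temperature for s in options.values()]
    m = max(scaled)
    weights = [math.exp(s - m) for s in scaled]
    return rng.choices(list(options), weights=weights, k=1)[0]

def generate(temperature=1.0, seed=0, max_words=10):
    """Generate one sentence, feeding each chosen word back in as the new context."""
    rng = random.Random(seed)
    word, output = "<start>", []
    for _ in range(max_words):
        word = sample_next(word, temperature, rng)
        if word == "<end>":
            break
        output.append(word)
    return " ".join(output)

# Different random seeds can yield different sentences from the same scores.
sentences = {generate(temperature=1.0, seed=s) for s in range(20)}
```

Every sentence is assembled from patterns in the table, but which patterns get combined varies from run to run. That is the “imitation and innovation” described above, in miniature.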

Applications:

- Chatbots: Ever chatted with an online customer support bot? There’s a good chance that behind those helpful responses is a model like GPT, efficiently handling queries and providing information.

- Content Creation: Bloggers, marketers, and even students are using tools powered by GPT to help brainstorm ideas, generate content, or even write essays. These tools can provide suggestions, expansions, or even entirely original pieces of content.

- Storytellers: Fancy a sci-fi tale or a romantic short story? GPT can weave narratives based on prompts, generating stories that range from fun and whimsical to surprisingly deep and meaningful.

- Educational Assistance: Beyond just crafting narratives, GPT models can also assist students in understanding complex topics, breaking down subjects into simpler, digestible explanations, or even quizzing them to reinforce learning.

By understanding the nuances and patterns of language, GPT stands at the forefront of AI-driven content generation, influencing industries from customer support to education and entertainment.

4. Evolution of GPT:

GPT-1: The Beginning

- Introduction: When GPT-1 was introduced, it was already a marvel in the field of natural language processing. With 117 million parameters (a measure of its complexity), it demonstrated the potential of Transformer architectures for language tasks.

- Capabilities: It could generate coherent paragraphs and showed promise in multiple tasks with only minimal task-specific changes to the model. However, it was just the tip of the iceberg.

GPT-2: Making Headlines

- Bigger and Better: GPT-2 was a leap forward with a whopping 1.5 billion parameters. This enhanced size allowed it to generate more coherent and longer passages of text.

- Caution: Its capability was so pronounced that OpenAI initially hesitated to release the full model, fearing misuse due to its ability to generate fake news or misleading information.

GPT-3: The Revolution

- A Giant: With 175 billion parameters, GPT-3 was a behemoth compared to its predecessors. It could produce essays, answer questions, write poetry, and even create basic computer code.

- Fine-Tuning: The vast size of GPT-3 meant it had a broad knowledge base. Often a handful of examples placed in the prompt was enough (so-called few-shot learning), and with minimal fine-tuning it could excel at specific tasks, opening doors to various commercial applications.

GPT-4: The Latest Evolution (as of this writing)

- Even Larger: OpenAI has not published GPT-4’s exact size or architecture. It is widely believed to be larger than GPT-3, and it is more capable on the benchmarks that have been reported.

- Broader Applications: GPT-4 pushes the envelope in diverse areas, from assisting researchers in scientific endeavors to powering more advanced chatbots and virtual assistants.

Continuous Growth: The progression from GPT-1 to GPT-4 is emblematic of our relentless pursuit of knowledge and technological advancement. With each version, GPT became more versatile, understanding the nuances of language and context with increasing finesse.
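
Where do parameter counts like these come from? A common back-of-the-envelope estimate is that each Transformer layer holds roughly 12 × d_model² weights, plus the embedding tables. The sketch below applies that rule to the layer counts, widths, vocabulary sizes, and context lengths as I recall them from the GPT-1, GPT-2, and GPT-3 papers. Those figures are not from this article, so treat them as assumptions. The function name `estimate_parameters` is hypothetical, and the estimate ignores small terms such as biases and layer normalization. GPT-4 is left out because its configuration has not been published.

```python
def estimate_parameters(n_layers, d_model, vocab_size, context_len):
    """Rough count: 12 * d_model^2 weights per layer, plus embedding tables."""
    # Attention contributes about 4 * d^2 and the feed-forward part about 8 * d^2.
    per_layer = 12 * d_model ** 2
    # Token embeddings plus position embeddings.
    embeddings = (vocab_size + context_len) * d_model
    return n_layers * per_layer + embeddings

# Configurations as recalled from the respective papers (assumptions).
CONFIGS = {
    "GPT-1": dict(n_layers=12, d_model=768, vocab_size=40478, context_len=512),
    "GPT-2": dict(n_layers=48, d_model=1600, vocab_size=50257, context_len=1024),
    "GPT-3": dict(n_layers=96, d_model=12288, vocab_size=50257, context_len=2048),
}

estimates = {name: estimate_parameters(**cfg) for name, cfg in CONFIGS.items()}
for name, n in estimates.items():
    print(f"{name}: about {n / 1e9:.2f} billion parameters")
```

The estimates land within a few percent of the headline figures of 117 million, 1.5 billion, and 175 billion. Most of the growth came from making the model wider and deeper rather than from any change in the basic recipe.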

5. Strengths of GPT:

Flexibility: Adaptability is Key

- Versatile Tool: GPT isn’t confined to just one role. Picture it as a Swiss Army knife of the AI world. Whether it’s understanding a question, translating languages, or crafting a story, GPT is up for the challenge without needing a complete overhaul.

- Streamlined Approach: This adaptability means users don’t have to reinvent the wheel each time they face a new task. GPT can be adjusted and fine-tuned, making it a go-to tool for diverse challenges.

Performance: A Benchmark Setter

- Raising the Bar: In many tasks, GPT goes beyond just getting the job done. On some benchmarks it matches or even exceeds typical human scores, and it produces drafts and answers far faster than a person could.

- High Achiever: GPT has been put to the test against various standard NLP benchmarks. Successive versions have often set new records, showcasing the model’s prowess and setting a high bar for others to meet.

Scalability: Growing in Stature and Skill

- Leveling Up: As GPT evolves, it’s akin to upgrading to a newer, more advanced version of your favorite app or game. With each version, as it becomes more complex, its abilities and performance see noticeable enhancements.

- Forward Momentum: The fascinating part is seeing this consistent growth. As the model expands and learns, its capability to handle tasks and challenges magnifies, leaving us eager to see its next iteration.

6. Challenges and Criticisms:

Computational Requirements: It’s Not Your Everyday Laptop Task

- High-Tech Gear Needed: Think of training GPT as attempting to stream multiple high-definition movies simultaneously on a basic tablet. It would struggle, right? Training GPT, especially the beefed-up newer versions, is similar. It demands top-of-the-line, advanced computer setups, akin to the supercomputers used for scientific research.

- Power-Hungry Process: Just as running multiple apps at once can quickly drain your phone or laptop battery, training and running these models requires enormous amounts of electricity. That’s not just costly; it also raises environmental concerns.
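
To put rough numbers on this, a widely used rule of thumb says training takes about 6 floating-point operations per parameter per training token. The sketch below applies it to GPT-3’s 175 billion parameters. The 300 billion training tokens is the figure I recall from the GPT-3 paper, and the cluster (1,000 accelerators, 100 TFLOP/s each, 40% utilization) is entirely hypothetical, chosen only to give a sense of scale. All function names are made up for this example.

```python
def training_flops(n_params, n_tokens):
    """Rule of thumb: about 6 floating-point operations per parameter per token."""
    return 6 * n_params * n_tokens

def weights_memory_gb(n_params, bytes_per_param=2):
    """Memory for the weights alone, at 2 bytes each (16-bit floats)."""
    return n_params * bytes_per_param / 1e9

def training_days(total_flops, n_devices, flops_per_device, utilization):
    """Wall-clock days if every device sustains the given fraction of its peak."""
    seconds = total_flops / (n_devices * flops_per_device * utilization)
    return seconds / 86400

GPT3_PARAMS = 175e9  # parameter count from the text above
GPT3_TOKENS = 300e9  # training tokens as recalled from the GPT-3 paper (assumption)

flops = training_flops(GPT3_PARAMS, GPT3_TOKENS)
memory = weights_memory_gb(GPT3_PARAMS)
# Hypothetical cluster: 1,000 accelerators at 100 TFLOP/s peak, 40% utilization.
days = training_days(flops, n_devices=1000, flops_per_device=100e12, utilization=0.4)
```

Under these assumptions the training run needs on the order of 3 × 10²³ operations and about three months on the hypothetical cluster. The weights alone occupy roughly 350 GB, far more than any single laptop or consumer graphics card can hold.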

Ethical Concerns: Handle with Care

- Potential for Misuse: GPT is incredibly adept at creating text. But in the wrong hands, this could mean generating misleading info or fake news that’s hard to tell apart from real articles or statements. It’s like having a superpower that can either save the day or wreak havoc.

- Who’s to Blame?: If something goes wrong due to GPT’s content, who takes the fall? The people who made it? The ones using it? Or is it a tech issue? This is a challenging question without clear answers yet.

Model Bias: Echoes from the Web

- Mimicking the Good and the Bad: Since GPT learns from content on the internet, it might sometimes echo biased or inaccurate views. It’s a bit like believing everything you read online without fact-checking; sometimes, you end up with wrong or skewed beliefs.

- Ironing Out the Wrinkles: The goal is to refine GPT so it can provide balanced and fair outputs. But perfecting this is tough. As it stands, GPT can occasionally produce content that raises eyebrows.

Conclusion

The world is quickly evolving, and at its forefront is the impressive power of artificial intelligence, with GPT shining as a prime example. Think of it like the early days of the internet or smartphones: we’re witnessing something that could reshape our future in profound ways.

The GPT models and their “transformer” family have so much potential that they are like a vast ocean yet to be fully explored. As technology keeps advancing, we might see GPT combining with other tech, such as virtual reality, smart devices, or even healthcare. Imagine a world where your virtual assistant doesn’t just answer questions, but perhaps even anticipates your needs, or helps doctors with complex medical diagnoses.

However, with great power come great challenges. It’s not just about making tech smarter; it’s about ensuring it’s used wisely. As GPT grows, we, as a society, will need to decide how and where to use it. Ethical considerations, like fairness, preventing misuse, and understanding the consequences of AI-generated content, will be as crucial as the technological advancements themselves.

So, while the future of GPT and similar technologies is hugely exciting, it’s a journey that requires a thoughtful mix of innovation, responsibility, and a deep understanding of what we, as a society, want from our tech.