An artificial intelligence called AlphaGo stunned experts when it won against a grand master five consecutive times in the toughest game in the world. The ancient Chinese board game, called ‘GO’ (围棋), which is more than 2,500 years old, is considerably tougher than chess because there are so many more ways – roughly 10 to the power of 170 – that a match can play out. GO is known as Wei Qi in China.

AlphaGo was created by Google DeepMind, a British artificial intelligence company (DeepMind Technologies) that was acquired by Google in 2014. It beat a human GO grand master 5 games to nil.

According to the American GO Association:

“GO is an ancient board game which takes simple elements: line and circle, black and white, stone and wood, combines them with simple rules and generates subtleties which have enthralled players for millennia. GO’s appeal does not rest solely on its Asian, metaphysical elegance, but on practical and stimulating features in the design of the game.”

Competitors in last year’s world championship in Thailand. Over 40 million people are avid GO players. (Image: International Go Federation Facebook)

An independent expert who was there was astonished. He called it a colossal breakthrough for AI (artificial intelligence) “potentially with far-reaching consequences.”

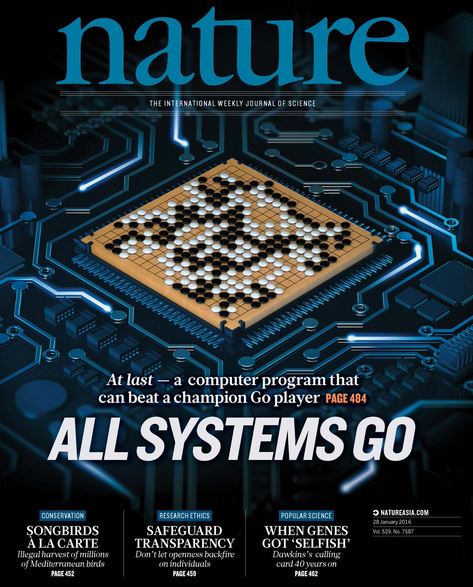

AlphaGo, in fact, reached this remarkable milestone in October 2015, but its creators waited until this week to make the announcement so that it could coincide with an article published in the prestigious academic journal Nature (reference at bottom of page).

A game that requires human intuition and gut feeling

The revered Chinese philosopher, teacher, politician, and editor Confucius (551 – 479 BC) once wrote about GO. In classical Chinese culture, a true scholar should be skilled in four essential arts, one of which is playing GO.

There are more than forty million GO enthusiasts across the world, and the rules are fairly simple. Two players take turns to place black or white stones on a board. The aim is to capture your adversary’s stones or to claim empty space by surrounding it.

Avid players say GO thinking is more lateral than linear, and much more dependent on a ‘feel’ for the stones, a ‘sense’ of shape, and gestalt perception of the game than logical deduction. ‘Gestalt’ refers to the perception of oneness from many.

It is extraordinary that an artificial intelligence managed to beat a human at our own game. One would think that our intuition and gut feel would put AlphaGo at a disadvantage. This was not the case.

A father teaching his kids how to play GO in Old Japan. The game has been popular in many parts of Asia for a very long time. (Image: asiasociety.org)

Even though it is a game with simple rules, playing it well requires enormous skill – it is a game of profound complexity. The game has 10 to the power of 170 ways it can play out; that is more than all the atoms that exist in the whole Universe!

It is this complexity and reliance on human-related instincts that AI scientists thought would make it super-hard for an AI to play the game GO, and therefore an enticing challenge for AlphaGo.

AI researchers use games as testing grounds where they can create smart and flexible algorithms that solve problems – often in ways eerily similar to how humans do it.

From basic games to the toughest of them all

An AI mastered the game of Noughts and Crosses (Tic-Tac-Toe) in 1952 – this was the first time an artificial entity ever conquered a game. Then another AI mastered Checkers (UK: Draughts). In 1997, the Russian World Chess Champion Garry Kasparov was beaten by the Deep Blue IBM supercomputer.

Demis Hassabis, co-founder of Google DeepMind. On his website he writes: “Before embarking on my research career, I was previously a well-known UK videogames designer and AI programmer.” (Image: twitter.com/demishassabis)

Artificial intelligences have also competed and won in other activities apart from games. In 2011, two human champions were beaten at Jeopardy by Watson, a question-answering computer system able to answer questions posed in natural language, developed by IBM.

Google’s own algorithms learned to play several Atari games in 2014 – they just used raw pixel inputs as a data source.

However, all of this pales in comparison with what AlphaGo has achieved. In the world of artificial intelligence, winning against a human GO champion is the equivalent of humans inventing the wheel.

Traditional AI methods would have been hopeless at playing GO. They build a search tree over all the possible alternatives, which means they could not finish going through the 10 to the power of 170 alternative outcomes in any practical amount of time.
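To get a feel for why exhaustive search is out of reach, here is a rough back-of-the-envelope sketch. It takes the 10-to-the-power-of-170 figure quoted in this article and assumes a purely hypothetical machine that checks a billion billion outcomes every second. That rate and the function name are illustrative assumptions, not measurements of any real system.

```python
# Back-of-the-envelope estimate. The search rate is an assumption chosen to be
# far more generous than any real computer; it is not a measured figure.
OUTCOMES = 10 ** 170                    # figure quoted in the article
OUTCOMES_PER_SECOND = 10 ** 18          # hypothetical search speed
SECONDS_PER_YEAR = 365 * 24 * 60 * 60   # ignoring leap years


def years_to_enumerate(outcomes: int, rate_per_second: int) -> int:
    """Whole years needed to visit every outcome once at the given rate."""
    return outcomes // (rate_per_second * SECONDS_PER_YEAR)


years = years_to_enumerate(OUTCOMES, OUTCOMES_PER_SECOND)
# Report only the order of magnitude, since the inputs are rough.
print(f"roughly 10^{len(str(years)) - 1} years")
```

Even at that imagined speed the answer comes out at around 10 to the power of 144 years, so brute force is simply not an option.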

The 1997 match between IBM’s supercomputer Deep Blue and Garry Kasparov was the first defeat of a reigning world chess champion to a computer under tournament conditions. The match was the subject of ‘The Man vs. The Machine’, a documentary film. (Images: Wikipedia)

AlphaGo uses a new AI approach

Co-founder of DeepMind, Demis Hassabis, a British neuroscientist, computer game designer, artificial intelligence researcher, world class gamer, and lead scientist of the team that created AlphaGo, said:

“So when we set out to crack Go, we took a different approach. We built a system, AlphaGo, that combines an advanced tree search with deep neural networks.”

“These neural networks take a description of the Go board as an input and process it through 12 different network layers containing millions of neuron-like connections. One neural network, the ‘policy network,’ selects the next move to play. The other neural network, the ‘value network,’ predicts the winner of the game.”
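The division of labour between the two networks can be illustrated with a toy sketch. This is not DeepMind's code: it swaps GO for a tiny, hypothetical take-away game (remove one to three stones from a pile, whoever takes the last stone wins), and both "networks" are hand-written stand-in functions rather than trained models. All names here are assumptions made for illustration.

```python
from typing import Dict

MAX_TAKE = 3  # toy game: take 1-3 stones, whoever takes the last stone wins


def policy_prior(stones: int) -> Dict[int, float]:
    """Stand-in for a policy network: a probability for each legal move."""
    moves = range(1, min(MAX_TAKE, stones) + 1)
    # Hand-written preference for larger takes; a real network would learn this.
    total = sum(moves)
    return {m: m / total for m in moves}


def value_estimate(stones: int) -> float:
    """Stand-in for a value network: chance that the player to move wins."""
    # In this toy game the player to move loses exactly when stones % 4 == 0.
    return 0.0 if stones % 4 == 0 else 1.0


def choose_move(stones: int, top_k: int = 2) -> int:
    """Consider only the top_k moves by prior, then score each one.

    Assumes stones >= 1, so that at least one move is legal.
    """
    priors = policy_prior(stones)
    candidates = sorted(priors, key=priors.get, reverse=True)[:top_k]
    # After our move the opponent is to move, so our score is 1 - their value.
    return max(candidates, key=lambda m: 1.0 - value_estimate(stones - m))


print(choose_move(7))           # picks among the two moves the policy prefers
print(choose_move(5, top_k=3))  # a wider search considers every legal move
```

The policy stand-in narrows the choice to a few promising moves, and the value stand-in judges the resulting position without playing the game out to the end. With seven stones the sketch takes three, leaving the opponent a losing pile of four; with five stones the narrow default search misses the best move, which shows how much depends on the quality of the policy.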

The DeepMind team trained the neural networks on over thirty million moves from matches that had been played by GO champions, until the AI could predict the human move 57% of the time – easily beating the previous record of 44%.

AlphaGo’s victory made the front cover of the latest issue of Nature. (Image: pbs.twimg.com)

However, they did not want it just to imitate the grand masters; it had to learn how to beat them at their own game. To do this, AlphaGo learned to discover new strategies by itself, playing tens of thousands of games between its neural networks and adjusting the connections using an approach known as reinforcement learning. This is a kind of trial-and-error process in which an AI automatically determines the ideal behaviour within a specific context in order to maximize its performance.
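The trial-and-error idea can be shown in miniature. The sketch below is far simpler than anything AlphaGo does: it uses a lookup table instead of neural networks, a basic Monte Carlo update with occasional random exploration, and a hypothetical take-away game (remove one to three stones, whoever takes the last stone wins) instead of GO. The pile size, parameter values and function names are all assumptions chosen for illustration.

```python
import random
from collections import defaultdict

MAX_TAKE = 3   # toy game: take 1-3 stones, whoever takes the last stone wins
START = 10     # hypothetical starting pile size


def legal_moves(stones):
    return list(range(1, min(MAX_TAKE, stones) + 1))


def self_play_training(games=20000, epsilon=0.2, step=0.1, seed=0):
    """Learn move scores purely from games the program plays against itself."""
    rng = random.Random(seed)
    q = defaultdict(float)  # q[(stones, move)]: learned score between -1 and 1
    for _ in range(games):
        stones, history = START, []
        while stones > 0:
            moves = legal_moves(stones)
            if rng.random() < epsilon:
                move = rng.choice(moves)                         # explore
            else:
                move = max(moves, key=lambda m: q[(stones, m)])  # exploit
            history.append((stones, move))
            stones -= move
        # Whoever made the last move won. Walk backwards through the game,
        # flipping the reward each turn because the two sides alternate.
        reward = 1.0
        for state_move in reversed(history):
            q[state_move] += step * (reward - q[state_move])
            reward = -reward
    return q


def greedy_move(q, stones):
    """Best move according to the learned scores (assumes stones >= 1)."""
    return max(legal_moves(stones), key=lambda m: q[(stones, m)])


q = self_play_training()
print({s: greedy_move(q, s) for s in range(1, START + 1)})
```

Nobody tells the program which moves are good; moves that tend to end in a win have their scores nudged up and the rest are nudged down. With enough games the table usually settles on leaving the opponent a multiple of four stones, although a sketch this small offers no guarantee of that.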

This required tons of computing power, which was made possible by using the Google Cloud Platform, the researchers said.

AlphaGo (the AI) and Google DeepMind (creator of the AI) logos. According to Wikipedia, the British artificial intelligence company DeepMind Technologies was founded in 2010 by Demis Hassabis, Shane Legg and Mustafa Suleyman. Google bought it in 2014 and its name became Google DeepMind.

First win against programmes, then top humans

After all that intensive training, the researchers felt AlphaGo was ready to be put to the test. It started off playing against other top programmes at the forefront of computer GO. It played a total of 500 games and lost only one of them.

Then AlphaGo was ready for the final test, the one that everybody was working towards – to see whether it could beat a top human GO player. Fan Hui, the reigning 3-time European GO champion, who had been playing for a living since he was 12 years old, accepted an invitation to take part.

Fan Hui went to Google’s London offices for a challenge match against AlphaGo.

They played in October last year and AlphaGo did not disappoint – it won five straight games (5 – 0). AlphaGo had made history; never before had an artificial intelligence beaten a top professional GO player.

Google DeepMind has issued a challenge to Lee Sedol 9P from South Korea, the world champion for most of the last 10 years, to play a 5-game, million-dollar match in March 2016.

The American GO Association quotes Myungwan Kim, a world-famous GO Grand Master, who said:

“I was shocked at how AlphaGo played. It played like a human professional. I am sad that this computer program might beat me, but I don’t think it can beat Lee Sedol.”

Prof. Stephen Hawking wonders what might happen if AI one day becomes more intelligent than us.

Is this milestone a blessing or the birth of a nightmare?

Is AlphaGo’s achievement a wonderful milestone or the beginning of humankind’s worst nightmare? Will AI, as it becomes more sophisticated, transform civilization and humans’ quality of life in a good way, or will it reach a level that poses a threat to us?

Might an AI that is far smarter than we are decide that we are not good for the planet, or conclude that we are our own worst enemy?

What would a super-clever artificial entity, one with an IQ two, three or four times greater than the world’s most intelligent human, think of the way we treat animals, each other, or our environment?

The University of Cambridge in England is launching a special centre to explore the pros and cons that AI will bring to humankind, thanks to a donation from the Leverhulme Trust.

We are only scratching the surface of what AI will one day be capable of. Computer science is advancing at lightning speed. AI will soon have the ability to analyze, learn and evolve on its own, at a far faster pace than humans evolve biologically.

Stephen Hawking’s & Elon Musk’s concern regarding AI

Renowned British theoretical physicist, cosmologist and author Professor Stephen Hawking has expressed concern about what AI might become one day. He worries that it could spell the end of our civilization and maybe even our very survival as a species.

Prof. Hawking once said:

“The development of full artificial intelligence could spell the end of the human race.”

Inventor, engineer, and entrepreneur Elon Musk, founder of a rocket firm and an electric car company, expressed his concerns regarding AI in an interview with the Guardian last year.

Mr. Musk said:

“I think we should be very careful about artificial intelligence. If I had to guess at what our biggest existential threat is, it’s probably that. So we need to be very careful. I’m increasingly inclined to think that there should be some regulatory oversight, maybe at the national and international level, just to make sure that we don’t do something very foolish.”

“With artificial intelligence we are summoning the demon. In all those stories where there’s the guy with the pentagram and the holy water, it’s like – yeah, he’s sure he can control the demon. Doesn’t work out.”

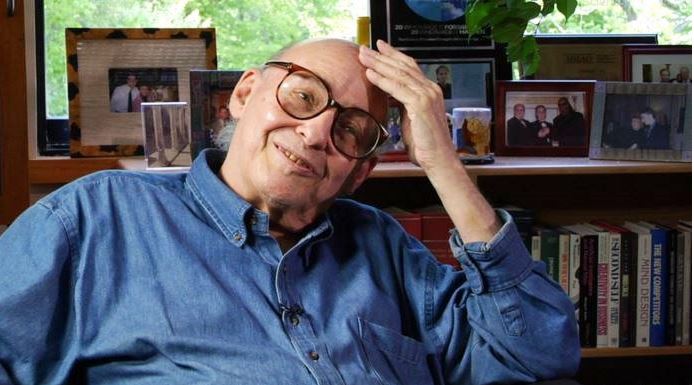

Prof. Marvin Minsky believed AI would be a wonderful thing for humankind.

AI funding patchy

Artificial intelligence funding over the past few years has been patchy. Researchers partly blame concerns voiced publicly by well-known people, including Hawking, Musk and Microsoft founder Bill Gates, for scaring off investors.

Professor Marvin Minsky, known as the ‘father of artificial intelligence’, who died earlier this month, was becoming increasingly frustrated by the shortage of both AI funding and researchers.

Prof. Minsky was convinced that humans would one day develop machines as smart as humans. He thought AI would be a wonderful blessing for us all.

When asked when machines might become as intelligent as humans, Prof. Minsky replied:

“How long this takes will depend on how many people we have working on the right problems.”

Luddite of the year candidates

Stephen Hawking, Elon Musk and Bill Gates were nominated by ITIF (Information Technology & Innovation Foundation) for the embarrassing title of Luddite of the Year. The winner is the person deemed to have contributed the most towards killing the advance of new technology.

It is ironic, and perhaps slightly bizarre, that three people at the cutting edge of science and technology should be put forward as alleged killers of new tech by ITIF. They are not exactly technophobes.

Today, the word Luddite means a person who does not like scientific or technological progress. The Luddites were nineteenth century textile workers in Britain who protested against the use of new labour-saving devices that were coming onto the market.

According to an ITIF report, since the beginning of the nineteenth century, there has been widespread suspicion about evil machines in popular culture “and these claims continue to grip the popular imagination, in no small part because these apocalyptic ideas are widely represented in books, movies, and music.”

Reference: “Mastering the game of Go with deep neural networks and tree search,” Demis Hassabis, David Silver, Koray Kavukcuoglu, Aja Huang, Thore Graepel, Nal Kalchbrenner, Chris J. Maddison, Arthur Guez, John Nham, Laurent Sifre, Madeleine Leach, Dominik Grewe, Ilya Sutskever, George van den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Marc Lanctot, Sander Dieleman, Timothy Lillicrap and Veda Panneershelvam. Nature 529, 484–489. 27 January 2016. DOI:10.1038/nature16961.
