Scientists at Columbia University’s Data Science Institute are to present ‘Sunlight’, a second-generation tool that they say brings transparency to the Web. They will present the new tool at the Association for Computing Machinery’s annual conference on security in Denver, Colorado, on October 14th.

Navigating the Web is getting progressively easier as companies monitor our browsing habits and emails to fine-tune the algorithms that serve us tailored ads and recommendations.

This convenience, however, comes at a cost. In the wrong hands, our personal data could be used against us: to discriminate against us in health insurance and housing, or to overcharge us for goods and services. In the hands of malicious actors, the risks are even greater.

Roxana Geambasu, an assistant professor of Computer Science at Columbia University, said:

“The Web is like the Wild West. There’s no oversight of how our data are being collected, exchanged and used.”

Sunlight follows XRay

Prof. Geambasu, together with computer scientists Daniel Hsu and Augustin Chaintreau and graduate students Yannis Spiliopoulos, Mathias Lecuyer and Riley Spahn, designed ‘Sunlight’. It builds on its predecessor, XRay, which linked ads shown to Gmail users with text in their emails, and recommendations on YouTube and Amazon with users’ viewing and shopping patterns.

Sunlight is more sophisticated than XRay. It works at a larger scale and more accurately matches tailored ads and recommendations to the pieces of data users supplied, the scientists say.

Previous studies have traced specific ads, product recommendations and prices to precise inputs like search terms, gender, and location, one by one.

The AdFisher tool received attention earlier this year after demonstrating that fake Web users identified as male job seekers were more likely than their female counterparts to be shown ads for executive jobs when they later visited news sites.

Sunlight, by contrast, is the first tool to analyze many inputs and outputs simultaneously. It forms hypotheses from one part of the data and then tests them on a separate dataset held out from the original. At the end, each hypothesis, together with the input and output it links, is rated for statistical confidence.

Prof. Hsu said:

“We’re trying to strike a balance between statistical confidence and scale so that we can start to see what’s happening across the Web as a whole.”
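To make the split-sample idea concrete, here is a minimal Python sketch of forming hypotheses on one half of the data and testing them on the other. It is not Sunlight’s implementation: the binary encoding of inputs (e.g. “this account’s emails contained keyword k”) and outputs (“this ad was shown”), the rule of picking the single most correlated input, the one-sided permutation test and the Bonferroni correction are all illustrative assumptions, and every function name is hypothetical.

```python
import random

def rate_difference(inputs, outputs, feature):
    """Ad-display rate among accounts with `feature` minus the rate among those without it."""
    with_f = [o for x, o in zip(inputs, outputs) if x[feature]]
    without_f = [o for x, o in zip(inputs, outputs) if not x[feature]]
    if not with_f or not without_f:
        return 0.0
    return sum(with_f) / len(with_f) - sum(without_f) / len(without_f)

def generate_hypothesis(inputs, outputs, n_features):
    """Hypothetical step 1 (training split): guess the input most associated with the output."""
    return max(range(n_features), key=lambda f: rate_difference(inputs, outputs, f))

def permutation_p_value(inputs, outputs, feature, n_perm=1000, rng=None):
    """Hypothetical step 2 (testing split): one-sided permutation test of the guessed link."""
    rng = rng or random.Random(0)
    observed = rate_difference(inputs, outputs, feature)
    shuffled = list(outputs)
    count = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any real input/output link
        if rate_difference(inputs, shuffled, feature) >= observed:
            count += 1
    return (count + 1) / (n_perm + 1)

def detect_targeting(inputs, outputs_by_ad, n_features, seed=0):
    """Split accounts in half, form one hypothesis per ad, test it on the held-out half."""
    rng = random.Random(seed)
    idx = list(range(len(inputs)))
    rng.shuffle(idx)
    half = len(idx) // 2
    train, test = idx[:half], idx[half:]
    n_hypotheses = len(outputs_by_ad)
    results = {}
    for ad, outputs in outputs_by_ad.items():
        feature = generate_hypothesis(
            [inputs[i] for i in train], [outputs[i] for i in train], n_features)
        p = permutation_p_value(
            [inputs[i] for i in test], [outputs[i] for i in test], feature, rng=rng)
        # Bonferroni correction: scale each p-value by the number of hypotheses tested.
        results[ad] = (feature, min(1.0, p * n_hypotheses))
    return results

if __name__ == "__main__":
    # Synthetic data: 40 accounts with 3 binary inputs each.
    accounts = [(i % 2, (i // 2) % 2, (i // 4) % 2) for i in range(40)]
    ads = {
        "A": [x[1] for x in accounts],  # shown only when input 1 is present
        "B": [1] * 40,                   # shown to everyone (untargeted)
    }
    print(detect_targeting(accounts, ads, n_features=3))
```

Keeping the testing half separate is what lets the p-value mean something: the hypothesis was chosen by looking at the training half only, so the test is not biased by that search. Real systems would need richer hypothesis generators and a multiple-testing correction suited to their scale; Bonferroni is used here only because it is the simplest to state.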

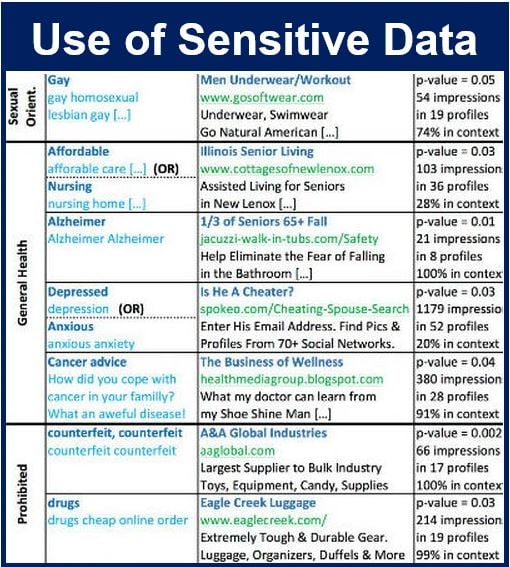

The scientists set up 119 Gmail accounts and, over one month last autumn, sent 300 messages containing sensitive words in their subject lines and bodies.

Some ads contradicted Google’s policy

Approximately fifteen percent of the ads that followed appeared to be targeted. Some of them seemed to contradict Google’s policy of not targeting ads based on people’s sexual orientation, race, religion, or sensitive financial categories, the team members said.

For example, when the words ‘unemployed’, ‘depressed’, and ‘Jewish’ were typed into the subject line, they triggered ads for ‘easy auto financing’, a service to find ‘cheating spouses’, and a ‘free ancestor’ search, respectively.

Prof. Geambasu and colleagues also set up fake browsing profiles and surfed the Web’s forty most popular sites to see what ads would appear. Only 5% of ads appeared to be targeted. However, some of them seemed to violate Google’s advertising ban on goods and services facilitating drug use.

For example, when visiting ‘hightimes.com’, they saw an ad for bongs at AquaLab Technologies.

Algorithms can sense your left or right political sympathies

The algorithms also appear to pick up on readers’ political leanings when they visit popular news sites. Fox News readers were shown ads for Israeli bonds, while Huffington Post readers were shown ads for an anti-Tea Party candidate.

This does not necessarily mean that Google and other companies are deliberately using sensitive data to target ads and recommendations, the researchers caution. They explain that the flow of personal data on the Web has become so complex that even the companies themselves may not know that such targeting is taking place.

Google abruptly shut down Gmail ads on November 10th, 2014, which was the last day Prof. Geambasu and her team were able to gather data. The ads appear to have been replaced by ‘organic ads’ displayed in the Promotions tab.

Sunlight, they say, can detect targeting in those ads too, although the researchers have not yet tested this.

Prof. Geambasu says Sunlight’s intended audience is journalists, watchdogs and regulators. The tool allows them to explore how personal data is being used and determine where closer investigation or monitoring is required.

Prof. Chaintreau said:

“In many ways the Web has been a force for good, but there needs to be accountability if it’s going to remain that way.”

Anupam Datta, a scientist at Carnegie Mellon who led the development of the AdFisher tool and was not involved in this current study, said:

“Sunlight is distinctive in that it can examine multiple types of inputs simultaneously (e.g., gender, age, browsing activity) to develop hypotheses about which of these inputs impact certain outputs (e.g., ads on Gmail). This tool takes us closer to the critical goal of discovering personal data use effects at scale.”

Citation:

“Sunlight: Fine-grained Targeting Detection at Scale with Statistical Confidence,” Mathias Lecuyer, Riley Spahn, Yannis Spiliopoulos, Augustin Chaintreau, Roxana Geambasu, and Daniel Hsu. DOI: http://dx.doi.org/10.1145/2810103.2813614.