Scientists have developed an algorithm, which they plan to turn into a photo app, that is so smart it can make your pics more memorable and tell you which photos are more forgettable. Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) say their algorithm can tell you how memorable or forgettable a picture is, and can suggest ways to give it more impact.

The scientists believe their creation could be useful for logo designers and improving advertising content.

Lead author and graduate student Aditya Khosla and his team created the algorithm, called MemNet, which they say is able to predict how memorable a picture will be. Using MemNet, they plan to create an app that subtly tweaks a photo to improve it – specifically, to make it more memorable.

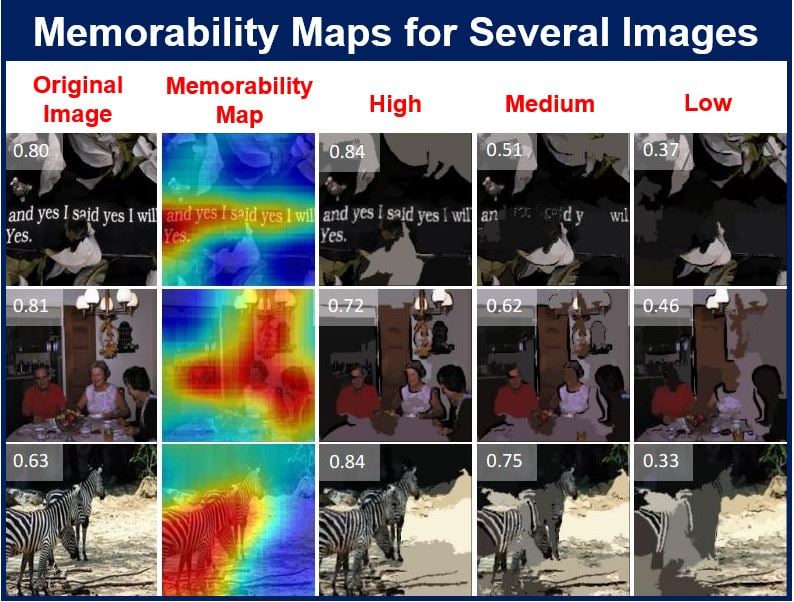

The memorability maps above are shown in the jet colour scheme, ranging from red to blue (highest to lowest). The memorability maps are independently normalized to lie from 0 to 1. The numbers in white are the memorability scores of each image. (Image: people.csail.mit.edu)

For each picture, the algorithm also makes a heat map that shows you exactly which parts of that pic are the most memorable. Members of the public are invited to upload their own photos online to see how it works.
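As the image caption notes, each memorability map is independently normalized to lie between 0 and 1. The article does not say how this is done, so the following is only a minimal sketch that assumes simple min-max rescaling; the function name `normalize_map` and the sample values are made up for illustration.

```python
def normalize_map(heat_map):
    """Rescale a 2-D list of raw scores so the values span 0 to 1.

    Assumption: plain min-max rescaling, applied to one map at a time.
    """
    flat = [value for row in heat_map for value in row]
    lowest, highest = min(flat), max(flat)
    if highest == lowest:
        # A completely flat map has no contrast; return zeros
        # rather than dividing by zero.
        return [[0.0 for _ in row] for row in heat_map]
    span = highest - lowest
    return [[(value - lowest) / span for value in row] for row in heat_map]


# Made-up raw scores for a tiny 2x2 "image".
raw = [[2.0, 4.0], [6.0, 10.0]]
normalized = normalize_map(raw)
print(normalized)
```

Because every map is rescaled on its own, the reddest region of one picture is not necessarily as memorable as the reddest region of another; the white overall score is what allows comparison between pictures.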

Mr. Khosla explained:

“Understanding memorability can help us make systems to capture the most important information, or, conversely, to store information that humans will most likely forget. It’s like having an instant focus group that tells you how likely it is that someone will remember a visual message.”

MemNet algorithm has several possibilities

The scientists say they can envisage several applications for MemNet, from improving the content of adverts and social media posts and developing better-quality teaching materials to creating your own personal ‘health’ assistant device that makes sure you don’t forget things.

Part of the project involved publishing the biggest image-memorability dataset in the world, called LaMem. It contains 60,000 images, each annotated with detailed metadata on qualities such as popularity and emotional impact.

Mr. Khosla and colleagues say LaMem is their effort to encourage further studies on what they believe is an under-studied area of computer vision.

The paper (citation at bottom of page) was co-written by Aude Oliva, Associate Professor of Cognitive Neuroscience at MIT and senior investigator of the project, fellow CSAIL graduate student Akhil Raju, and Antonio Torralba.

The algorithm creates a heat map. (Image: people.csail.mit.edu)

Mr. Khosla presented the paper at the International Conference on Computer Vision (ICCV), held at the Convention Center in Santiago, the capital of Chile.

About the algorithm

A previous algorithm for facial memorability had been created by the same team. However, this new one is unique in that it uses techniques from deep learning, an artificial intelligence (AI) field that uses ‘neural networks’ – systems that teach computers to sift through enormous quantities of data to seek out and identify patterns all on their own, i.e. with no human help.

Several applications use deep learning today, including Apple’s Siri, an intelligent assistant built into mobile devices that responds to your voice commands, as well as Google’s auto-complete and Facebook’s photo-tagging feature.

Prof. Oliva explained:

“While deep-learning has propelled much progress in object recognition and scene understanding, predicting human memory has often been viewed as a higher-level cognitive process that computer scientists will never be able to tackle. Well, we can, and we did!”

Neural networks work out what the underlying correlations or causes might be, with no human guidance. They are organized in several layers of processing units, each one performing computations on the data in succession. As the network gathers more data, it readjusts to produce more accurate predictions.
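The two ideas in the paragraph above, layers that process data in succession and a model that readjusts as it sees more examples, can be sketched in a few lines. This is a toy illustration only and not MemNet's architecture: the functions `forward` and `train_step`, the single-weight model, and all numbers are assumptions chosen to keep the example small.

```python
def forward(x, layers):
    """Pass a value through each layer in turn.

    Each layer is a (weight, bias) pair followed by a ReLU,
    which replaces negative results with zero.
    """
    for weight, bias in layers:
        x = max(0.0, weight * x + bias)
    return x


def train_step(weight, x, target, learning_rate=0.1):
    """One gradient-descent update for the model y = weight * x,
    using squared error as the measure of how wrong it is."""
    prediction = weight * x
    gradient = 2 * (prediction - target) * x
    return weight - learning_rate * gradient


# Layers applied one after another (made-up weights).
stacked_output = forward(1.0, [(2.0, 0.0), (0.5, 1.0)])

# Readjusting: the weight starts at 0 and should approach 2,
# because the only training example maps an input of 1 to 2.
weight = 0.0
errors = []
for _ in range(20):
    errors.append(abs(weight * 1.0 - 2.0))
    weight = train_step(weight, x=1.0, target=2.0)

print(stacked_output, round(weight, 3), errors[0], round(errors[-1], 3))
```

Each pass through the loop shrinks the error a little, which is the sense in which a network "readjusts" as it processes more data.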

The researchers fed the algorithm many tens of thousands of images from several different datasets, including LaMem and the scene-oriented SUN and Places. Each image had been given a ‘memorability score’ based on how well people remembered it during online experiments.
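The article does not give the formula behind the ‘memorability score’. As a rough sketch, assume the score is simply the fraction of viewers who correctly recognized an image when it was shown to them a second time; the function name `memorability_score` and the responses below are hypothetical.

```python
def memorability_score(responses):
    """Fraction of viewers who recognized the image on its repeat showing.

    responses: list of booleans, one per viewer (True = remembered).
    Assumption: a plain hit rate, with no correction for false alarms.
    """
    if not responses:
        raise ValueError("need at least one response")
    return sum(responses) / len(responses)


# Made-up experiment: three of four viewers remembered the picture.
score = memorability_score([True, True, False, True])
print(score)
```

A score near 1 would mean almost everyone remembered the picture, and a score near 0 would mean almost no one did.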

Researchers pitted algorithm against human subjects

Mr. Khosla and colleagues then pitted their algorithm against human subjects by tasking the model with predicting how memorable a group of people would find a new, previously unseen photograph.

Its predictions were found to be thirty percent more accurate than current algorithms, and were only a few percentage points below the average human performance.

The algorithm creates a heat map for each picture that shows which parts are most memorable. The map highlights the regions that could be adjusted to enhance the memorability of the image.

Associate professor of computer science at the University of California at Berkeley, Alexei Efros, said:

“CSAIL (MIT’s Computer Science and Artificial Intelligence Laboratory) researchers have done such manipulations with faces, but I’m impressed that they have been able to extend it to generic images. While you can somewhat easily change the appearance of a face by, say, making it more ‘smiley,’ it is significantly harder to generalize about all image types.”

Looking into the future – updating the system

The researchers said their study also unexpectedly provided some insights into the nature of human memory. Mr. Khosla says he had wondered whether the human participants would remember everything placed in front of them if they were shown only the most memorable pictures.

Mr. Khosla said:

“You might expect that people will acclimate and forget as many things as they did before, but our research suggests otherwise. This means that we could potentially improve people’s memory if we present them with memorable images.”

The researchers said they plan to update the system so that it is able to predict the memory of a specific individual, as well as to better target it for ‘expert industries’ such as retail clothing and logo design.

Prof. Efros said:

“This sort of research gives us a better understanding of the visual information that people pay attention to. For marketers, movie-makers and other content creators, being able to model your mental state as you look at something is an exciting new direction to explore.”

The project was financed by grants from the National Science Foundation, the McGovern Institute Neurotechnology Program, the MIT Big Data Initiative at CSAIL, and Xerox and Google research awards. Nvidia donated software.

Reference: “Understanding and Predicting Image Memorability at a Large Scale,” Aditya Khosla, Aude Oliva, Akhil S. Raju and Antonio Torralba. International Conference on Computer Vision (ICCV), 2015.