Your task is to create an artificial intelligence which is as fast as the human brain, the US Federal Government told Harvard University when it was awarded a grant of $28 million. The money has gone to Harvard’s John A. Paulson School of Engineering and Applied Sciences, its Department of Molecular and Cellular Biology, and its Center for Brain Science.

The three faculties will now develop new machine learning algorithms by ‘pushing the frontiers of neuroscience’. Current artificial intelligence is incredibly slow at learning to recognise things.

IARPA (Intelligence Advanced Research Projects Activity) funds large-scale research projects aimed at addressing the most difficult challenges facing the US intelligence community.

Imagine what a bio-inspired artificial intelligence is capable of. The aim is to make it as smart as, or even smarter than, the human brain. (Image: media.news.harvard.edu)

Intelligence staff drowning in flood of data

Intelligence agencies are drowning in a flood of data – far more than they are capable of analysing in a reasonable and practical amount of time.

The human brain is innately good at detecting patterns. However, intelligence staff cannot keep pace with the rate at which new information comes in.

Currently available artificial intelligence is the basis for much of the progress in automation, but it is inferior to even the most basic mammalian brains when it comes to recognizing patterns, and it is light-years behind what our brains can do.

IARPA has tasked Harvard with finding out why our brains are so good at learning, and then using that information to design computer systems that are able to interpret, analyse, and learn information as rapidly as we can.

The aim is to make an ultra-smart AI

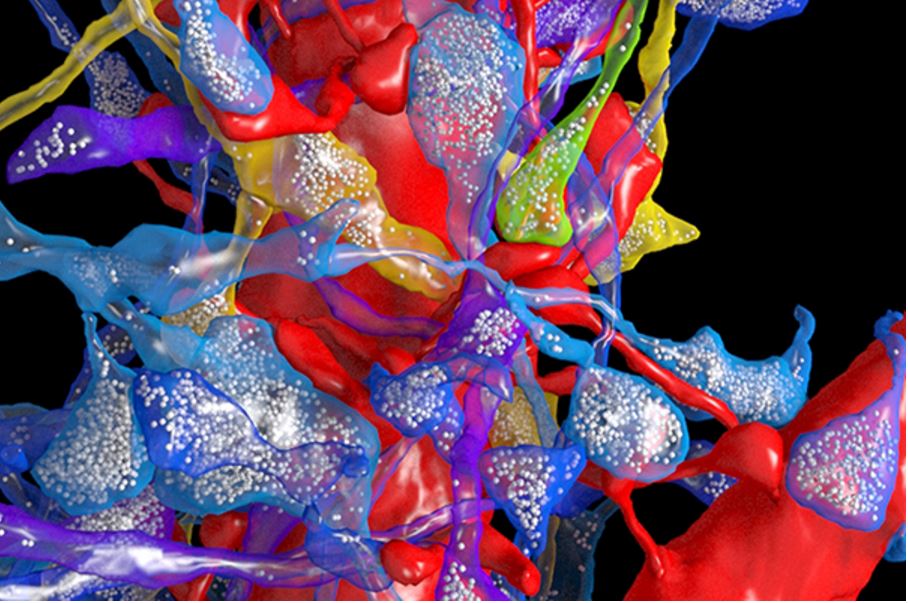

Harvard engineers and scientists will record activity in the brain’s visual cortex in unprecedented detail, then map its connections at a scale never attempted before. If they can successfully reverse-engineer that data, they should in theory be able to build an ultra-smart artificial intelligence (AI) driven by new computer algorithms for learning.

The new artificial intelligence should be able to navigate, for example, through a crowd as easily as a human or guide dog. (Image: cs.washington.edu)

The ideal target would be to create an AI that can match the human brain at detecting and understanding patterns – or perhaps even outperform us.

Project leader David Cox, assistant professor of molecular and cellular biology and computer science, said:

“This is a moonshot challenge, akin to the Human Genome Project in scope. The scientific value of recording the activity of so many neurons and mapping their connections alone is enormous, but that is only the first half of the project.”

“As we figure out the fundamental principles governing how the brain learns, it’s not hard to imagine that we’ll eventually be able to design computer systems that can match, or even outperform, humans.”

These systems could be created to drive cars, read MRI images, detect network invasions, or anything in between.

A dog’s brain is far superior to current AIs. It can spot its owner immediately, without the need to see hundreds of images first. It can even instantly tell whether a human is happy or upset. (Image: vetmeduni.ac.at)

The research team that will tackle this challenge includes Hanspeter Pfister, the An Wang Professor of Computer Science; Jeff Lichtman, the Jeremy R. Knowles Professor of Molecular and Cellular Biology; Ryan Adams, assistant professor of computer science; and Haim Sompolinsky, the William N. Skirball Professor of Neuroscience. Researchers from Notre Dame, New York University, MIT, Rockefeller University, and the University of Chicago will also collaborate.

It all starts with lab rats

The multi-stage project starts in Prof. Cox’s lab, where rats will be trained to visually recognize a series of objects on a computer monitor.

While the rats are learning, Dr. Cox and his team will record the activity of visual neurons using state-of-the-art laser microscopes built for this project with scientists at Rockefeller University, to determine how brain activity changes as the rats learn.

Is this the first step towards a James Bond-type cyborg spy? (Image: thirkanislair.blogspot.mx)

Then 1 cubic millimeter of brain will be sent down the hall to Prof. Lichtman’s lab, where it will be cut into ultra-thin slices and imaged under the world’s first multi-beam scanning electron microscope, housed in the Center for Brain Science.

Prof. Lichtman said:

“This is an amazing opportunity to see all the intricate details of a full piece of cerebral cortex. We are very excited to get started but have no illusions that this will be easy.”

A one-petabyte data mountain

This challenging process should generate more than one petabyte of data. One petabyte equals either 2⁵⁰ bytes (1,024 terabytes) in binary units or 10¹⁵ bytes (a million gigabytes) in decimal units, roughly equivalent to 1.6 million CDs’ worth of information.
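The unit arithmetic can be checked with a few lines of Python. This is a worked sketch, not part of the project; it assumes a CD holds 700 MB, taken as 700 × 10⁶ bytes, a capacity the article does not state.

```python
# Worked check of the petabyte figures quoted above.
# Assumption (not from the article): a CD holds 700 MB = 700 * 10**6 bytes.

PB_BINARY = 2 ** 50        # binary "petabyte" (strictly a pebibyte), in bytes
PB_DECIMAL = 10 ** 15      # SI petabyte, in bytes
TB_BINARY = 2 ** 40        # binary terabyte (tebibyte), in bytes
GB_DECIMAL = 10 ** 9       # SI gigabyte, in bytes
CD_BYTES = 700 * 10 ** 6   # assumed CD capacity

terabytes = PB_BINARY // TB_BINARY     # 1024 binary terabytes per binary petabyte
gigabytes = PB_DECIMAL // GB_DECIMAL   # a million gigabytes per SI petabyte
cds = PB_BINARY / CD_BYTES             # number of 700 MB CDs in 2**50 bytes

print(f"2**50 bytes = {terabytes} binary terabytes")
print(f"10**15 bytes = {gigabytes:,} gigabytes")
print(f"2**50 bytes / 700 MB = {cds:,.0f} CDs")
```

Using the decimal petabyte (10¹⁵ bytes) with the same CD assumption gives about 1.4 million CDs, so the 1.6 million figure corresponds to the binary definition.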

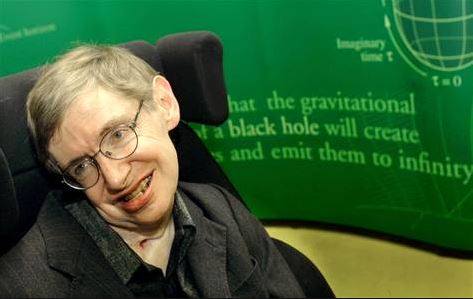

If we one day develop a super-smart artificial intelligence that keeps rapidly improving (upgrading) itself, it could eventually overtake us. Is there a chance it might see us as either a threat to the planet or an inconvenience, and decide to destroy us? That is one of Prof. Stephen Hawking’s concerns.

This mega trove of data will then be sent to Prof. Pfister, whose algorithms will reconstruct synapses, cell boundaries, and connections, and visualize them in 3-D.

Prof. Pfister said:

“This project is not only pushing the boundaries of brain science, it is also pushing the boundaries of what is possible in computer science. We will reconstruct neural circuits at an unprecedented scale from petabytes of structural and functional data.”

“This requires us to make new advances in data management, high-performance computing, computer vision, and network analysis.”

Why can mammals recognise things so fast?

If they only got this far, the scientific impact would already be huge, but they will go further. As soon as the scientists know how visual cortex neurons are interlinked in 3-D, they will work out how the brain uses these connections to rapidly process data and infer patterns from new stimuli.

One of the greatest challenges that computer scientists currently have is the amount of training data that deep-learning systems need. For example, for an AI to recognize a car, its computer system needs to see literally hundreds of thousands of vehicles.

However, humans, apes and other mammals don’t work that way. We don’t need to see something hundreds of thousands of times in order to recognize it – we only need to see it a few times. How many times does a dog have to ‘see’ its master before recognizing him or her?

In the later phases of the project, researchers at Harvard and collaborating centres will create computer algorithms for learning and pattern recognition that are inspired and constrained by the *connectomics data.

*According to Boston University’s Nerve Blog:

“Connectomics is the study of the structural and functional connections among brain cells; its product is the ‘connectome’, a detailed map of those connections. The idea is that such information will be monumental in our understanding of the healthy and diseased brain.”
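In computational terms, a connectome is commonly represented as a directed graph: neurons are nodes and synaptic connections are weighted edges. The sketch below assumes that representation; the neuron IDs (`n1` to `n4`), synapse counts and helper functions are hypothetical and say nothing about how the Harvard team actually stores or analyses its data.

```python
from collections import defaultdict

# Hypothetical connectome fragment: (presynaptic, postsynaptic, synapse_count).
# IDs and counts are invented for illustration; they are not project data.
SYNAPSES = [
    ("n1", "n2", 3),
    ("n1", "n3", 1),
    ("n2", "n3", 5),
    ("n3", "n4", 2),
    ("n4", "n2", 1),
]

def build_connectome(synapses):
    """Return a directed weighted graph as {pre: {post: synapse_count}}."""
    graph = defaultdict(dict)
    for pre, post, count in synapses:
        # Accumulate in case the same neuron pair appears more than once.
        graph[pre][post] = graph[pre].get(post, 0) + count
    return graph

def in_degree(graph):
    """Count how many distinct neurons project onto each neuron."""
    counts = defaultdict(int)
    for targets in graph.values():
        for post in targets:
            counts[post] += 1
    return dict(counts)

def reachable_from(graph, start):
    """Neurons reachable from `start` by following synapses (depth-first)."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for post in graph.get(node, {}):
            if post not in seen:
                seen.add(post)
                stack.append(post)
    return seen

connectome = build_connectome(SYNAPSES)
print(in_degree(connectome))                     # {'n2': 2, 'n3': 2, 'n4': 1}
print(sorted(reachable_from(connectome, "n3")))  # ['n2', 'n3', 'n4']
```

A real connectome reconstructed from a cubic millimeter of cortex would be vastly larger, but the questions asked of it (who connects to whom, and how strongly) take the same graph form.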

Bio-inspired computer algorithms

These computer algorithms, which will be biologically inspired, will hopefully outperform current computer systems in their ability to recognize patterns and make inferences from much more limited data inputs.

In other words, to recognize a car, they won’t need to look at hundreds of thousands of vehicles – their thought processes will be more similar to ours.
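To make the ‘few examples’ idea concrete, here is a minimal sketch of one well-known few-shot technique, nearest-prototype (class-mean) classification. It is not the project’s algorithm, which the article does not describe; the two-number feature vectors and the labels are invented stand-ins for features a vision system would extract from images.

```python
import math

# Hypothetical 2-D feature vectors standing in for image features.
# A real system would extract these from images; here they are invented.
FEW_EXAMPLES = {
    "car":    [(0.9, 0.1), (0.8, 0.2), (1.0, 0.0)],
    "person": [(0.1, 0.9), (0.2, 0.8), (0.0, 1.0)],
}

def prototype(points):
    """Mean vector of a class's few labelled examples."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def fit(examples):
    """'Training' is just averaging: one prototype per class."""
    return {label: prototype(pts) for label, pts in examples.items()}

def classify(prototypes, x):
    """Assign x to the class whose prototype is nearest (Euclidean distance)."""
    return min(prototypes, key=lambda label: math.dist(prototypes[label], x))

protos = fit(FEW_EXAMPLES)
print(classify(protos, (0.7, 0.3)))  # car
print(classify(protos, (0.3, 0.6)))  # person
```

Here ‘training’ amounts to averaging three examples per class, the opposite extreme from a deep network fitted to hundreds of thousands of images; the hard part in practice is learning features in which such simple averaging works.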

This research, among other things, may improve the performance of computer vision systems that can help robots see and navigate through complex environments.

Prof. Cox said:

“We have a huge task ahead of us in this project, but at the end of the day, this research will help us understand what is special about our brains.”

“One of the most exciting things about this project is that we are working on one of the great remaining achievements for human knowledge – understanding how the brain works at a fundamental level.”