MIT researchers have devised a way to help robots navigate environments more like humans do: by learning as they go. When we move through a crowd toward a goal, we can usually navigate the space safely, and without thinking too much about it.

We learn from other people’s behavior and remember which obstacles or dangers to avoid. Robots, on the other hand, navigate very differently and struggle with these concepts.

Helping robots navigate by exploring their environment

The MIT researchers’ new motion-planning model lets robots work out how to reach a goal by exploring their environment, observing other agents, and exploiting what they have learned previously in similar situations.

Andrei Barbu and colleagues describe their model in a paper (citation below), which they presented at the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), held in Madrid, Spain, from October 1 to 5, 2018.

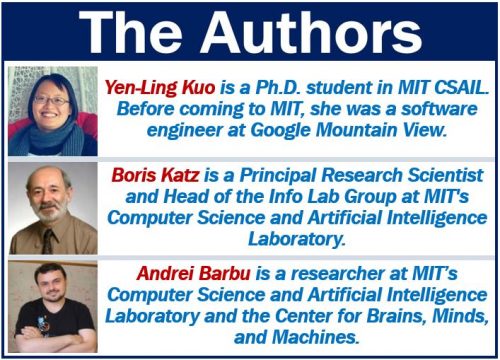

Dr. Barbu is a researcher at MIT’s Computer Science & Artificial Intelligence Laboratory (CSAIL). He also works at MIT’s McGovern Institute’s Center for Brains, Minds, and Machines (CBMM). His co-authors are Boris Katz, a principal research scientist and head of the InfoLab Group at CSAIL, and first author Yen-Ling Kuo, a Ph.D. student at CSAIL.

The term ‘artificial intelligence’, commonly abbreviated to ‘AI’, refers to software technologies that make computers or robots think and behave more like humans.

Motion-planning algorithms

Most motion-planning algorithms today build a tree of possible decisions that branches out until it finds good paths for navigation.

For example, to reach a door, a robot builds a step-by-step search tree of potential movements. It then executes the best route to the door that satisfies all the constraints, such as obstacles in the way.
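To make the idea of a search tree concrete, here is a minimal sketch that is not taken from the paper: a textbook A* search on a small grid room. The grid layout, the unit-cost four-way moves and the `plan_to_door` function name are all illustrative assumptions. Each reachable cell becomes a node in the tree, its parent link records the branch it grew from, and the search stops once the door is reached along the cheapest route.

```python
import heapq

def plan_to_door(grid, start, door):
    """A* search over 4-connected, unit-cost moves on a grid where '#' marks a wall.

    Every cell added to `parent` is a node of the search tree; its parent link
    records which earlier movement the branch grew from.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance never overestimates the remaining moves.
        return abs(cell[0] - door[0]) + abs(cell[1] - door[1])

    frontier = [(h(start), 0, start)]  # (estimated total cost, cost so far, cell)
    parent = {start: None}
    cost = {start: 0}
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == door:
            # Walk back up the tree to recover the best route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        if g > cost[cell]:
            continue  # outdated queue entry; a cheaper branch reached this cell
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                if g + 1 < cost.get(nxt, float("inf")):
                    cost[nxt] = g + 1
                    parent[nxt] = cell  # grow a new branch of the tree
                    heapq.heappush(frontier, (g + 1 + h(nxt), g + 1, nxt))
    return None  # no route to the door

# Hypothetical room: '.' is free floor, '#' is wall; the door is at row 0, column 9.
room = [
    "..#.......",
    "..#.####..",
    "..#....#..",
    "..####.#..",
    ".......#..",
]
path = plan_to_door(room, start=(0, 0), door=(0, 9))
print(len(path) - 1)  # 23 moves
```

Calling `plan_to_door` a second time for the same room rebuilds the whole tree from scratch; nothing learned in the first search carries over to the next one.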

One major drawback of these algorithms

These algorithms have one major drawback: they rarely learn. Robots that use them cannot leverage information about how they themselves acted in similar situations and environments, or about how other agents acted before.

Dr. Barbu said:

“Just like when playing chess, these decisions branch out until [the robots] find a good way to navigate. But unlike chess players, [the robots] explore what the future looks like without learning much about their environment and other agents.”

“The thousandth time they go through the same crowd is as complicated as the first time. They’re always exploring, rarely observing, and never using what’s happened in the past.”

New model helps robots navigate by learning

The research team developed a model that combines a planning algorithm with a neural network. The network learns to recognize the best paths and uses that knowledge to guide a robot’s movement through its environment.
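The general idea of letting past experience steer a planner can be sketched as follows, under heavy simplification. This is not the authors’ model: per its title, the paper pairs sampling-based planning with deep sequential neural models, whereas here a hypothetical `make_experience_sampler` simply remembers the waypoints of an earlier successful plan and biases new random samples toward them. The planner is a basic RRT-style tree search; the room with a narrow gap in a wall, the step size and the 70% guidance probability are arbitrary assumptions.

```python
import math
import random

# Hypothetical 10 x 10 room: a wall at 4.5 <= x <= 5.5 with a narrow gap at 4.0 <= y <= 5.5.
def blocked(p):
    x, y = p
    return 4.5 <= x <= 5.5 and not (4.0 <= y <= 5.5)

def uniform_sampler(rng):
    """No prior knowledge: sample anywhere in the room."""
    return (rng.uniform(0, 10), rng.uniform(0, 10))

def make_experience_sampler(past_path, p_guided=0.7, sigma=0.5):
    """Crude stand-in for a learned model (NOT the paper's network): with
    probability p_guided, sample near a waypoint of a previously successful path."""
    def sampler(rng):
        if rng.random() < p_guided:
            wx, wy = rng.choice(past_path)
            x, y = rng.gauss(wx, sigma), rng.gauss(wy, sigma)
            return (min(max(x, 0.0), 10.0), min(max(y, 0.0), 10.0))  # stay inside the room
        return uniform_sampler(rng)  # keep some pure exploration
    return sampler

def plan(start, goal, sampler, seed=0, step=0.5, goal_tol=0.5, max_iters=20000):
    """RRT-style tree search. Returns (path, iterations used) or (None, max_iters)."""
    rng = random.Random(seed)
    nodes, parent = [start], [None]
    for it in range(1, max_iters + 1):
        sample = sampler(rng)
        # Extend the tree from the node nearest to the sample by at most one step.
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[i_near]
        d = math.dist(near, sample)
        if d == 0:
            continue
        t = min(step, d) / d
        new = (near[0] + t * (sample[0] - near[0]), near[1] + t * (sample[1] - near[1]))
        # Only new nodes are collision-checked, so an edge may clip a wall corner slightly;
        # a 0.5 step cannot jump across the 1-unit-thick wall.
        if blocked(new):
            continue
        nodes.append(new)
        parent.append(i_near)
        if math.dist(new, goal) <= goal_tol:
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1], it
    return None, max_iters

# First trip through the room: explore with no prior knowledge.
first_path, first_iters = plan((1.0, 1.0), (9.0, 9.0), uniform_sampler, seed=1)
# A later trip through the same room: reuse what the first trip found.
guided = make_experience_sampler(first_path)
second_path, second_iters = plan((1.0, 1.0), (9.0, 9.0), guided, seed=2)
print(first_iters, second_iters)
```

Comparing `first_iters` with `second_iters` across several seeds is one way to check whether the remembered experience pays off. One would expect fewer iterations when the prior is good, but a single run like this is not a benchmark, and a real learned model would generalize to new rooms rather than replay one old path.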

Robots navigated in two settings

The researchers demonstrated the advantages of their model in two settings:

- First, navigating through challenging rooms with narrow passages and traps.

- Second, navigating areas while avoiding collisions with other agents.

One promising real-world application is helping driverless cars navigate intersections, where a car must quickly evaluate what other agents will do before it merges into traffic.

At the Toyota-CSAIL Joint Center, the researchers are currently pursuing such applications.

Kuo said:

“When humans interact with the world, we see an object we’ve interacted with before, or are in some location we’ve been to before, so we know how we’re going to act.”

“The idea behind this work is to add to the search space a machine-learning model that knows from past experience how to make planning more efficient.”

Citation

Yen-Ling Kuo, Andrei Barbu, Boris Katz. “Deep sequential models for sampling-based planning.” arXiv:1810.00804 (cs.RO), submitted on 1 October 2018. arXiv (pronounced ‘archive’) is a repository of electronic preprints (e-prints) that are posted after moderation but are not peer reviewed.